Marketing Cloud Intelligence (formerly known, and forever in my heart, as Datorama) is a deep platform for marketers who have Salesforce in their tech stack. It’s a robust tool for optimizing data across various channels, enabling you to track spend, engagement, and conversion data, among other options. If you’ve landed on this page, I assume that you already have a working knowledge of the Intelligence platform. Now, let’s take your skills to the next level with insights you didn’t even know you needed to unlock greater insights through Intelligence formulas.

We also recommend you check out this blog post to understand how you can get more from your Intelligence implementation through an audit.

Let’s dive in.

What’s on the Horizon?

In this blog post, we’re going beyond the basics of your standard Trailhead module. Our journey will uncover hidden Intelligence formula secrets in the following areas:

- Formula Syntax with JavaScript

- Parsing Dates

- Referencing CSV/Data Model Fields and When to Use Each Syntax

- Formula Fears – Goodbye!

Intelligence Formula Syntax with JavaScript

Let’s start on some behind-the-scenes basics to give everyone a little bit more working knowledge, and then we’ll hit some deep cuts.

You’ve probably wondered what governs the formulas in the Intelligence platform that somehow you can write Excel-esque statements but also do JavaScript work (more on this in a moment). Basically, the platform was programmed to contain Excel-like formulas while also allowing for MVEL, a Java-based language, to do some variations of formula work that can work a little differently in processing and possibility than Excel formulas.

You can read more of an overview here directly from Salesforce on the governance of formulas and the basics to fiddle with, and below you’ll get my deeper cuts that don’t really get elaborated on anywhere I can find, officially.

The Writer’s Strike did not affect this (Java) Script

There are two common experiences I’ve had for the last five years when it comes to if statements:

- Someone implemented the platform and used JavaScript language, and no one still on the team understands how to read or manipulate the formula.

- Someone made an unruly Excel-like IF(condition, true, false) statement and it’s become unwieldy.

Luckily, I have your fix, and I’ll give a brief why on this too, beyond the notes above — I want to introduce everyone to using lowercase ‘ifs.’ Let’s take the below-calculated dimension (there would be no difference if this was setup in mapping of a data stream, to be clear).

- We define our first if statement — in a lowercase if context, you just do if() for the first line. Unlike in EXCEL IFs (henceforth capital IFs), you do not need to define a false condition, just a true condition (in this case, if the campaign name contains ‘Facebook’).

- If our first condition is true, ‘Facebook’ will be the value returned, as defined by line 2. This is defined by the squiggly brackets {} and the defined value is ended with a semicolon {‘Facebook;’}

*This is an exciting performance element that adds up across large statements, and especially if the value is in a calculated field, which loads in real time, not before a page is loaded. If the statement finds a true match, it doesn’t run through every line of the code, it just stops and computes the next value. An uppercase IF statement, on the other hand, would check through every true/false possibility and then load the next row, which en masse could make a performance difference.

- Else if defines some other condition to check for specifically. If you wanted a simple true/false, you could skip straight to the else statement on line five. But for example’s sake, we will assume there’s an else if. This is effectively how you can nest ifs. As noted, the platform stops checking as soon as it hits a true value, so you want to be mindful that you stack this accordingly in your checking if multiple conditions could be true.

- Once again, you return a value if true on line 4, no variation in formatting to the first true value.

- Finally, we close by doing a return value with the word “else”, indicating for all other conditions we close off here.

This is about as far as you need to know for JavaScript usage in Intelligence. But if you’re making deeply complex conditional formatting, this will hopefully make the process much cleaner and decipherable for you!

Parsing Parsedates

Magic letters you should write down, and yes this probably looks nonsensical before my description, but roll with it: “EEE MMM dd hh:mm:ss zzz yyyy”

This is your fix to one of two likely parsedate situations I have seen regularly. It’s a string that dates frequently get passed into Intelligence as, and you inexplicably get a “cannot parse data” error in your mapping. This is infuriating. The formula in full that you are probably looking for is PARSEDATE(csv[insert field name here], EEE MMM dd hh:mm:ss zzz yyyy”).

I’ll also note here that this is likely what is being pushed into the platform, even if you see something different in your Excel file/csv file from an Intelligence log (highlighted below from the data streams list in Connect & Mix, in case you need guidance on how to find your log files). You should, anytime you get this error, open your log files using a note program (Sublime Text was my go-to for years as a Windows user for bigger files, though for most people programs like notepad will work just fine). Even if not the above format, you can see definitively (with your columns instead separated and broken out by commas, hence the term csv, comma-separated values) what format your dates come in as (and the magic of a program like Excel to just know how a human reads this data cleanly).

There’s also an error I’ve gotten numerous times in platform and have helped people with but have not been unfortunate enough to encounter recently myself, so I am approximating here with a known fix instead. This happens entirely in calculated dimensions, and it effectively amounts to “Unparseable date: 1234”. This will fully stop you from saving a calculated dimension and it’s infuriating. My fix:

At the top of your formula, set a condition: if([insert field here, likely day but whatever the error tells you] == 1234 )

{‘error’}

else if… and continue on with your calculated dimension as planned

*note: if this does not work, you may also want to try “1234” instead of 1234, as a string of text instead of as a number, depending how the platform is reading the problematic value.

csv verses Dat

Through the course of the above pieces of guidance, you may have noticed something: in my calculated if example, there were yellow highlighted fields simply called “Campaign_Name,” and in my examples referring to mapping, I surrounded fields with the language of “csv[field name]”. Why would I do that to you (the answer is not that I am cruel and seek to confuse you further as I write this blog, I promise)?!?! Well, it’s because there are three variations of referencing fields in Intelligence.

- When you map data, the csv syntax is needed to establish we expect a column from the inbound file. Even if you use Excel format, tsvs, pdfs, etc., this context will always be referred to as csv in your mapping formulas to establish a recurring column. Commonly this looks like the below, and is probably not something you’ve thought about a lot.

- So you may be wondering, if this is so commonplace, why even explain it? Surely the easy explanation is that csv appears in mapping, and the highlighted field appears in calculated dimensions. Well, kind of, yes. There’s a twist coming below, but yes in calculated dimensions, because you are operating outside of a data stream and inclusive of your whole workspace, you get the highlighted yellow names, showcased again below, to indicate this is a field in the platform.

- It’s important to understand those two variations because the third is a marriage of them: referencing data stream fields, not source file columns, in data stream mapping. I have very rarely seen any use of this outside of Vlookups, but for that case alone I’ll highlight what this does: it notes that a value is meant to reference an already existing data point in platform. In the context of a vlookup below, you can see the reason for differentiating these items.

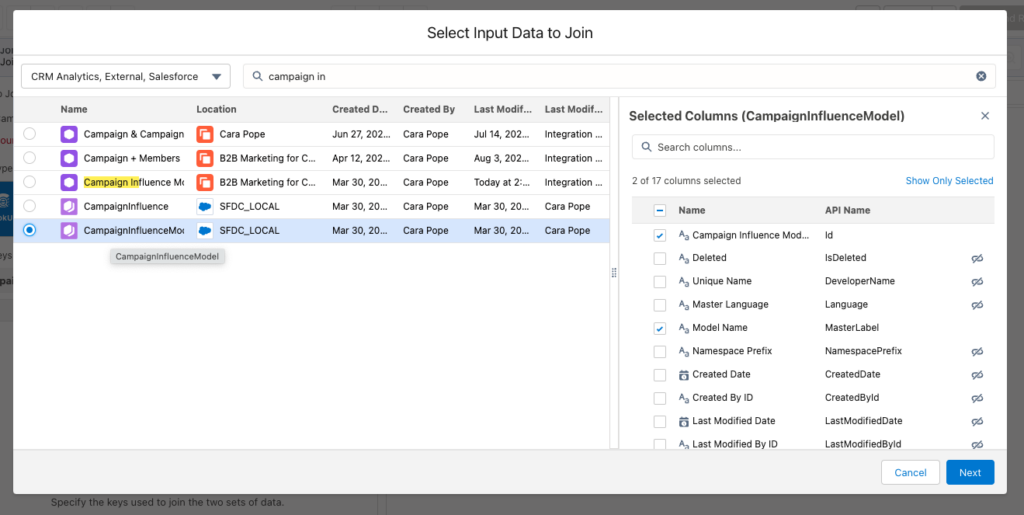

We have a csv field we reference at every ingestion of data, which we then use to look into first our campaign advertiser data already in the platform, and return us the associated campaign name, also already in the platform. You can see the data model section of the formula editor below, where these existing data model fields can be referenced in mapping.

Else…

Hopefully, these tips have been helpful and can act as an easy cheat sheet as you use Intelligence going forward. Whether it’s using if statements, parsing dates, or understanding how to reference different types of fields, you hopefully found some new information today to help make you better at the platform (I won’t call you a Dato-dork yet, but I happily will wear that cap and keep trying to take more of you with me)!

Keep an eye out for more in this series. We look forward to growing your Intelligence!

Remember to drop us a line when you’re ready to realize the full potential of your Intelligence implementation and how it fits in with your overall marketing strategy.