Okay, before people start clutching their pearls, let me clarify…

A/B tests are not a waste of time. But bad ones are. And there are a lot of bad ones.

Pardot makes it easy to run A/B tests on things like subject lines, body copy, color, images and more (in Pro edition or higher). But the ease of configuring these tests in the system does not make them easy to do right.

Your time is precious. If you’re running A/B tests and collecting very little and/or slightly pointless data, you’re expending valuable energy that could be leveraged elsewhere.

Like doing fun Pardot things that actually do have an impact. Or napping.

What good email marketing A/B tests look like

Well-run A/B tests have a few things in common:

- They test a meaningful hypothesis

- They collect enough data to make a decision on

- They isolate one variable

- They’re executed successfully

- They’re interpreted successfully

Simple in theory. For digital marketers with a budget and a gazillion things on their plates though, there are a lot of small ways to get tripped up.

Here are times when you should skip the A/B test, and not feel bad about it.

1. If the audience isn’t big enough

Let’s say you run an A/B test, and twice as many people click through on Version B. We have a clear winner, right?

It seems like a simple yes, but there’s some nuance here. The real answer is something more like “um, maybe.”

The size of the overall send and the difference in the two response rates determine whether your results are statistically significant – aka your threshold for “sure enough that you should take action on it.”

Kissmetrics has a free tool that is my go-to for determining the actionability™ of A/B test data and determining next steps.

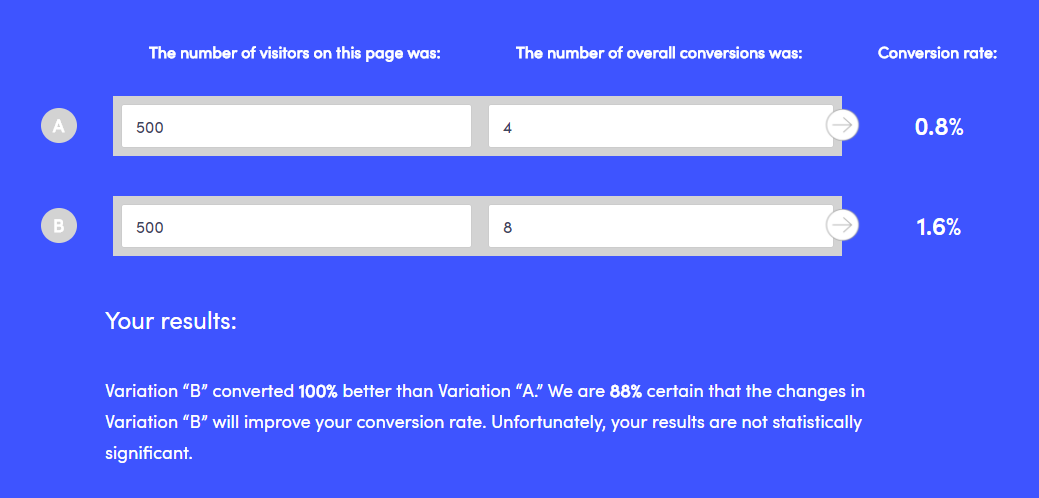

For example, if we take the 2X improvement mentioned above for a sample size of 1,000, we see that it doesn’t pass the smell test:

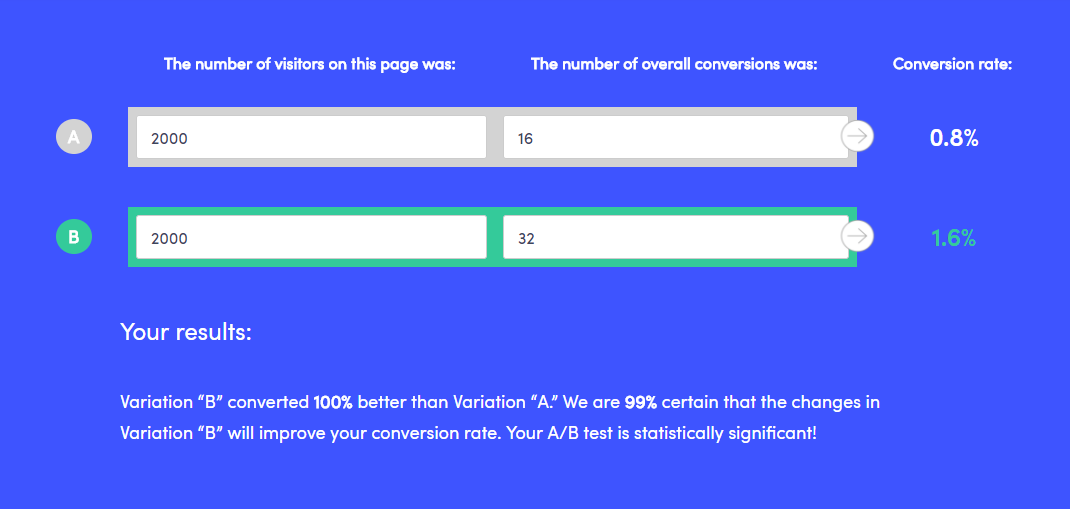

With a larger sample and the same conversion rates, though, we get a different result:

The bottom line: before you commit to a pivot in strategy based on an A/B “winner,” make sure your numbers are truly meaningful.
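If you'd rather sanity-check the numbers yourself, here is a minimal sketch of a two-proportion z-test, one common way to compare two click rates. It is not necessarily the method the Kissmetrics tool uses, and the click counts below are made up for illustration (a 1% vs. 2% click rate is an assumption, not a figure from a real send).

```python
import math


def two_proportion_z_test(clicks_a, sent_a, clicks_b, sent_b):
    """Return (z, two-sided p-value) for the difference between two click rates."""
    rate_a = clicks_a / sent_a
    rate_b = clicks_b / sent_b
    # Pooled rate under the assumption that A and B perform the same.
    pooled = (clicks_a + clicks_b) / (sent_a + sent_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / sent_a + 1 / sent_b))
    if std_err == 0:
        raise ValueError("No variation in the data; cannot compute a z-score.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical send of 1,000 split evenly: 5 clicks (1%) vs. 10 clicks (2%).
z_small, p_small = two_proportion_z_test(5, 500, 10, 500)

# Same hypothetical rates on a send of 10,000: 50 clicks vs. 100 clicks.
z_large, p_large = two_proportion_z_test(50, 5000, 100, 5000)

print(f"1,000 sends:  z = {z_small:.2f}, p = {p_small:.4f}")
print(f"10,000 sends: z = {z_large:.2f}, p = {p_large:.6f}")
```

With these made-up numbers, the smaller send lands above the usual 0.05 cutoff and the larger one lands well below it, even though Version B doubles the click rate in both cases.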

2. If it compares apples to oranges

If Email A is radically different from Email B, and you do have a statistically significant result – then what?

What was it that caused your audience to respond better to Email A? If you have too many variables, it’s impossible to say. Try changing ONE thing in each test, and iterate as you go.

Examples of things to vary could include:

- Call to action verbiage (like “RSVP” vs. “Save Your Seat”)

- Email layout (one column vs. two column, image placement, etc.)

- Personalization options (e.g. “Hi Jon” vs. “Dear Mr. Snow”)

- Headline

- Subject line

- Pre-header text

- Different offers / discount terms (e.g. 20% off vs. get 2 months free)

3. If the clock is ticking

Is your email time sensitive, or do you have other messages planned to that same list segment? If so, it might not be a great candidate for A/B testing.

Pardot lets us pick what percentage of the list we want to test and how long we want to test before declaring a “winner” and sending to the remainder of our contacts. I’ve worked with several clients who A/B test all of their emails, but when you go back and look at the results, a different “winner” would have been selected if they had given their test a bit more time.

If you can, give your test a full 24 hours. Data from MailerMailer indicates that it takes people longer to open messages than one might expect. Here’s their breakdown of when emails were actually opened:

- 13.7% within the first hour of sending

- 54% within the first 5 hours of sending

- 83% within the first 24 hours of sending

- 90% within 2 days

- 100% within about 2 weeks

Apparently, not all of us are checking our phones with Pavlovian compulsion during meetings. Maybe your audience is, though – so consider analyzing your Pardot data to understand how long your audience tends to take to open and click through on your emails following a send.

You can see this info under list email reports:

If you can’t give your email test 24 hours (because you’re promoting a super special secret flash sale that ends at midnight or something like that) then give it as long as you can.

How late can you declare a “winner” and send the remainder without your email being untimely or overlapping with other planned sends?
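To put a rough number on that trade-off, here is a small sketch that estimates what share of eventual opens you would have in hand after a given test window. It uses the MailerMailer figures quoted above and draws straight lines between them, which is a simplifying assumption; treating “about 2 weeks” as 336 hours is also an assumption. Your own audience may behave quite differently.

```python
# Cumulative share of opens (%) by hours since send, from the MailerMailer
# figures quoted above. "About 2 weeks" is taken as 336 hours (an assumption).
OPEN_CURVE = [
    (0, 0.0),
    (1, 13.7),
    (5, 54.0),
    (24, 83.0),
    (48, 90.0),
    (336, 100.0),
]


def share_of_opens_by(hours):
    """Estimate the % of eventual opens seen after `hours`, by linear interpolation."""
    if hours <= 0:
        return 0.0
    # Walk consecutive pairs of points and interpolate within the matching one.
    for (h0, s0), (h1, s1) in zip(OPEN_CURVE, OPEN_CURVE[1:]):
        if hours <= h1:
            return s0 + (s1 - s0) * (hours - h0) / (h1 - h0)
    return 100.0


for window in (2, 5, 12, 24):
    print(f"{window:>2} hours: about {share_of_opens_by(window):.0f}% of opens")
```

Under these assumptions, a 12-hour test window would capture roughly 65% of the opens you will eventually get, compared with 83% for the full 24 hours.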

4. If you don’t plan to do anything with the data

“Oh B won? Cool.”

Don’t A/B test for the sake of A/B testing. It’s a completely useless exercise if you don’t do anything with the information you’re gathering.

If you don’t have a hypothesis or a plan to act on the results of your A/B test… then don’t do it.

The net impact is that you’re adding a lot of time to your process and not learning that much.

We each have a limited number of hours in our day, so give yourself permission to do something other than fussing over A/B variations whose outcome you’re apathetic about.

How do you do A/B testing in Pardot?

Over to you — how are you leveraging A/B testing in your digital marketing, particularly with Pardot? What’s worked, and what’s tripped you up?

Share in the comments!